Practical writing on AI context management, LLM governance, policy enforcement, and enterprise AI architecture.

More tokens don't mean better control. Here's why bigger context windows are solving the wrong problem for enterprise AI teams.

When bad data gets into your model's working memory, the outputs degrade too. This is what context poisoning looks like in practice.

Ad hoc filtering doesn't scale. A policy layer that runs before the model does is the only approach that holds up under real usage.

Legal teams started the conversation. Now engineering teams are the ones responsible for making it work in production.

Small teams handle context manually. Then traffic triples and the whole system buckles. These are the failure modes to plan for.

If your context layer can't describe what it contains, your policy engine can't do its job. Semantic tagging fixes that.

RBAC exists for databases and APIs. It needs to exist for AI context too — and the implementation isn't as obvious as you'd think.

Compliance logs that just say "model ran" are useless. Here's what a useful AI audit trail actually needs to capture.

We've talked to hundreds of teams. The same five problems show up over and over. Here's what they are and how to avoid them.

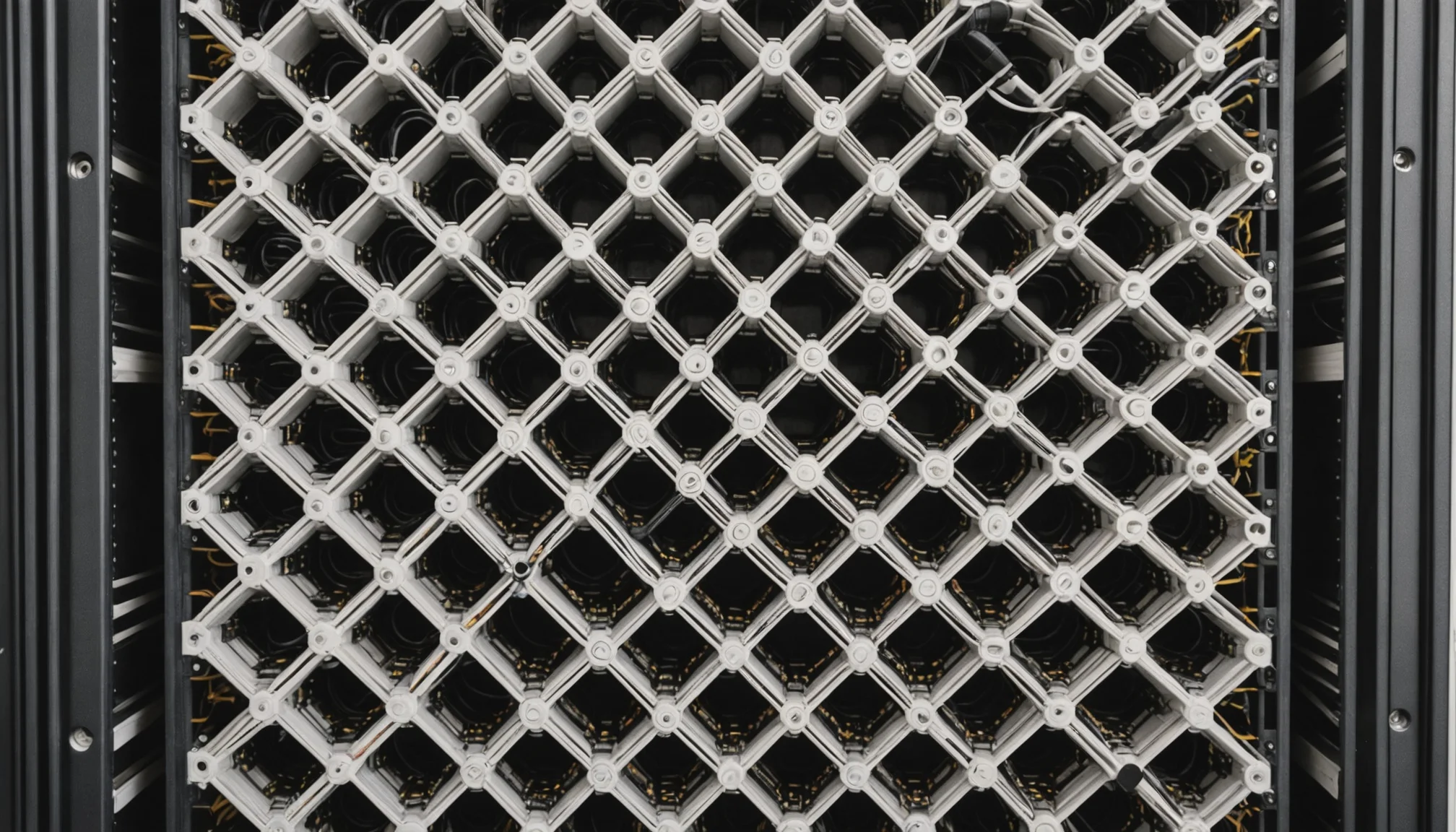

Governance at inference speed is possible. These are the architectural decisions that get you there without compromising enforcement quality.

Prompt engineering shapes how the model responds. Context governance controls what it knows. They're complementary, not interchangeable.

How Meibel's context processing pipeline works under the hood — from ingestion through policy evaluation to governed delivery.